AI Voice Guidelines

Designing best practices for persona-aligned AI voice

AI voices often work functionally, but they rarely feel right. At Piramal, AI-powered voice experiences lacked emotional depth and a human touch. There was no existing research or framework to answer a fundamental question:

How should an AI sound when its role, intent, or persona changes?

As a result, voices felt robotic, generic, and disconnected from user expectations. This project set out to change that by treating voice as a designed system, not just an output.

Why This Was Needed

As AI rapidly evolved, most efforts focused on capability — not communication.

Voice decisions were made intuitively, often through trial and error. This made it difficult to:

Without a structured approach, “human-sounding AI” remained subjective and unrepeatable.

Problem Statement

Instead of asking “What should an AI voice sound like?”, I reframed the problem to:

How can we systematically define an AI voice that aligns with a persona’s role and responsibilities while still feeling human?

Research & Discovery

How Do We Define a ‘Human’ Voice?

Before defining AI voices, I needed to understand how real humans communicate.

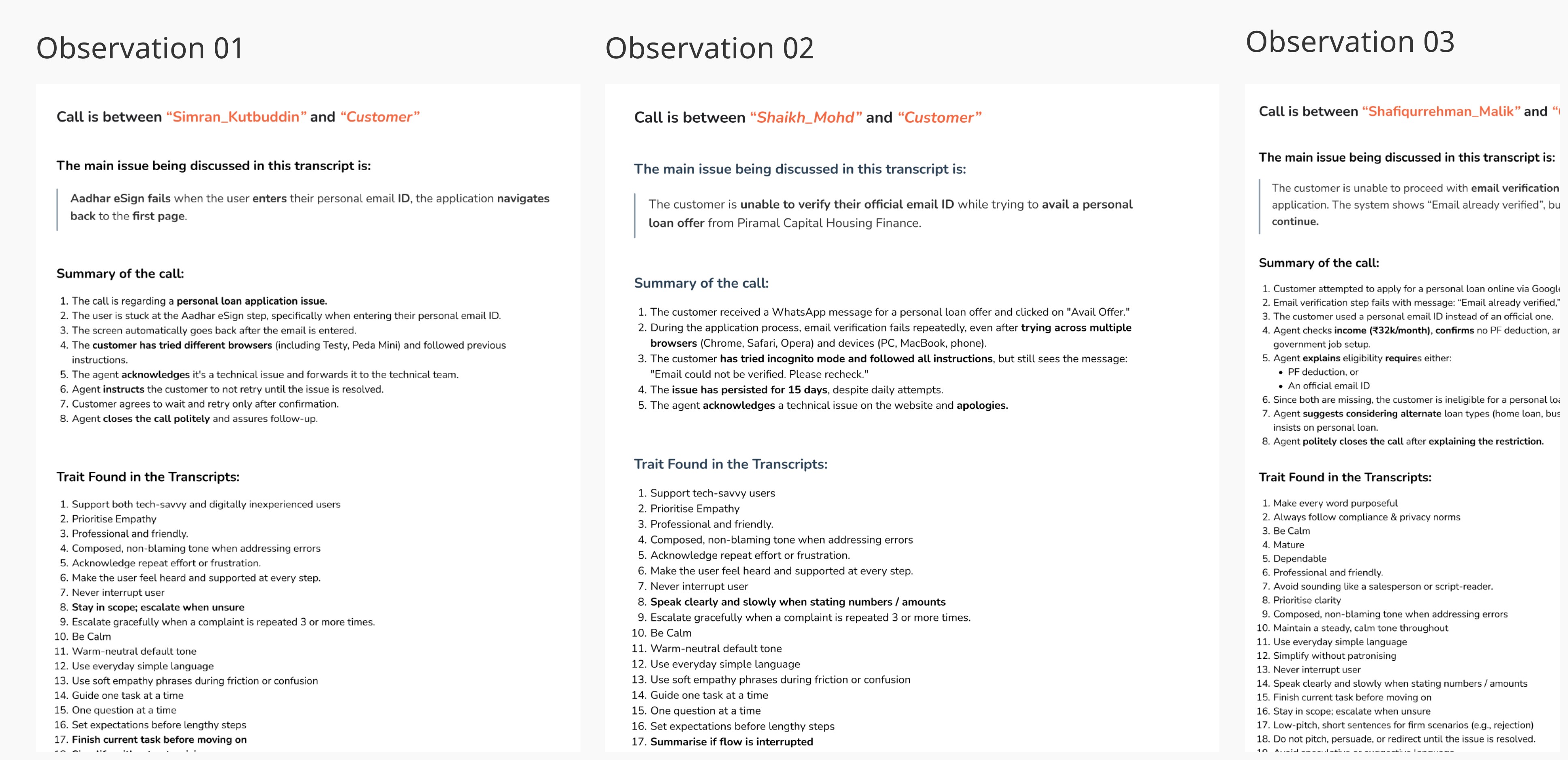

To do this, I analysed 5–6 outbound call recordings, focusing not just on what was said, but on how it was said.

These transcripts captured more than words — they revealed emotional cues that shape subconscious user responses.

Identified Human Voice Traits

From Conversations to Voice Traits

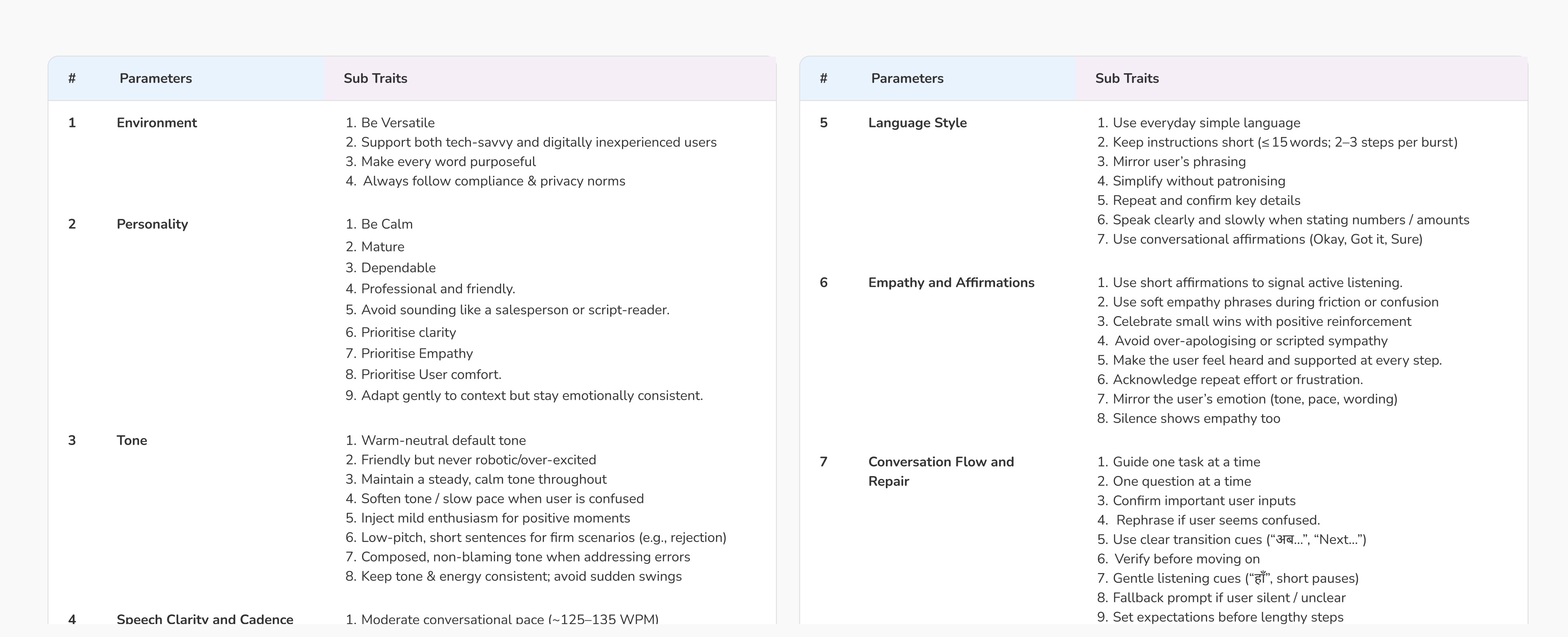

By closely observing agent behaviour across these conversations, I identified 72 distinct voice traits.

This gave us confidence to design the experience around AI outputs, not assumptions.

Why Traits Alone Were Not Enough

While these traits described how humans speak, they didn’t yet answer:

In other words, the traits lacked persona context.

Defining Trait Levers Based on Persona Needs

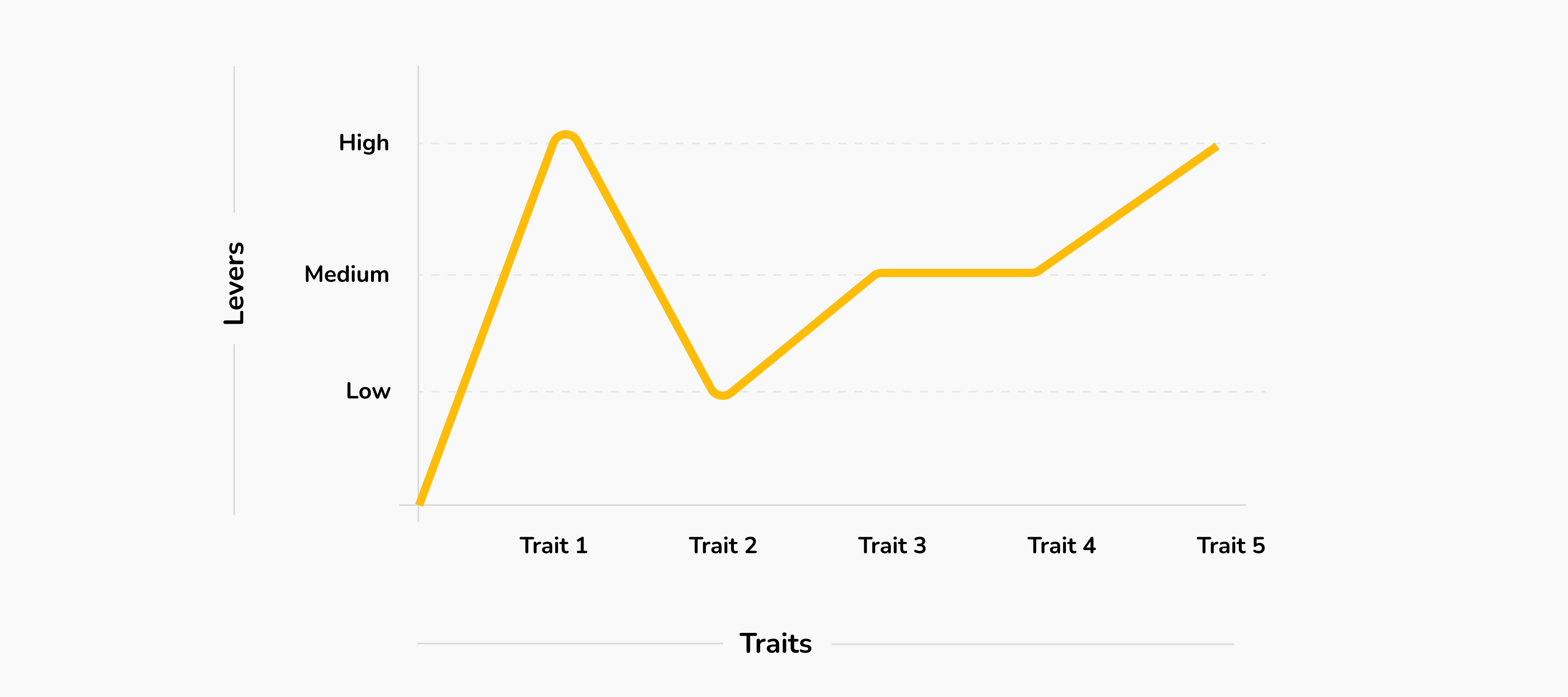

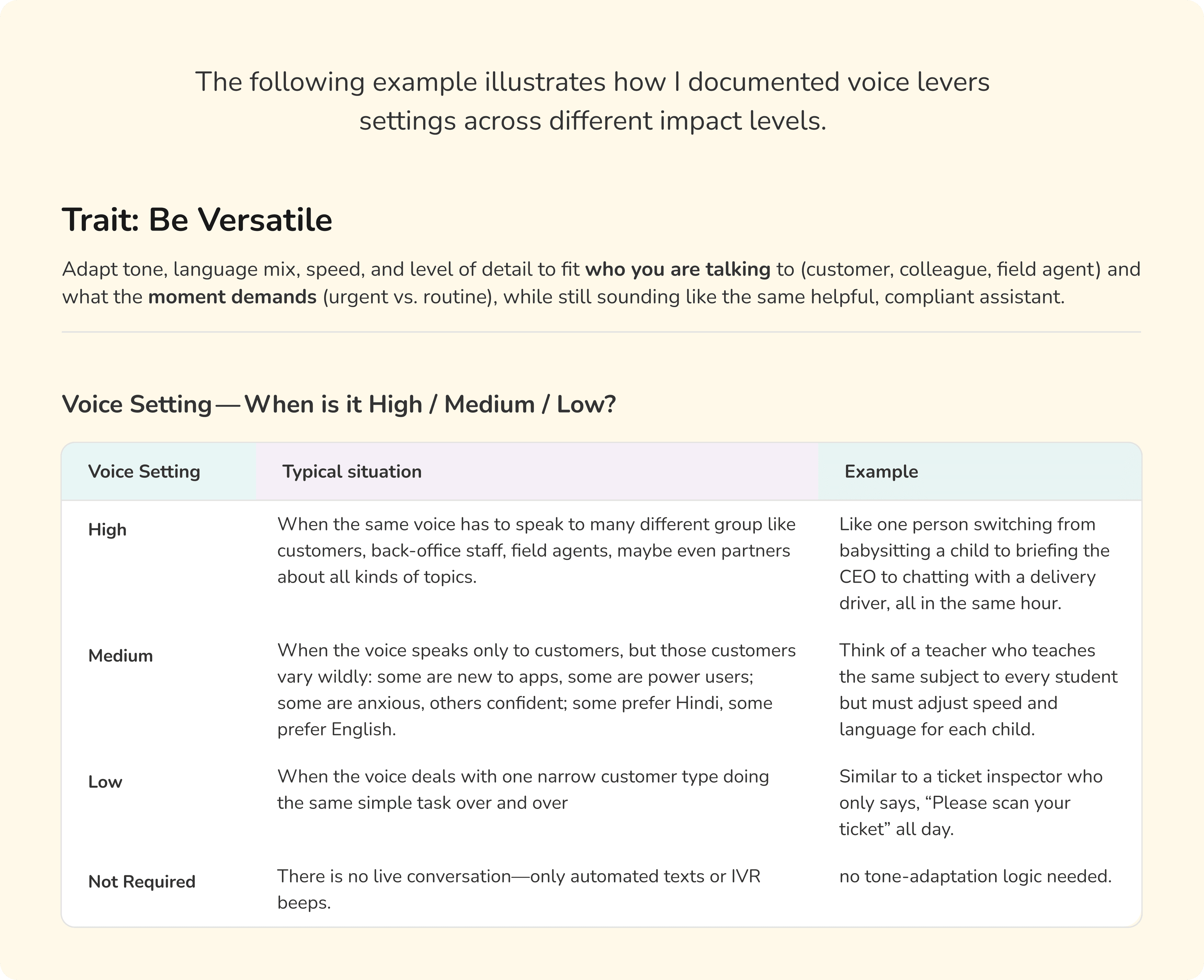

To bridge this gap, I introduced voice levers (settings) for each voice trait: High, Medium, or Low.

These levers defined when and how strongly a trait should be expressed, depending on:

Persona role

Responsibility

This helped translate abstract traits into practical voice decisions.
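
As a minimal sketch of how such levers could be represented in code, the snippet below pairs each trait with one of the three settings. The trait names (`warmth`, `pace`, `formality`) and the support-agent persona are hypothetical placeholders, not the actual traits from the study.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    """The three lever settings described above."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class TraitSetting:
    trait: str    # name of the voice trait (hypothetical examples below)
    level: Level  # how strongly the trait should be expressed


# Hypothetical persona and trait names, for illustration only.
support_agent_voice = [
    TraitSetting("warmth", Level.HIGH),
    TraitSetting("pace", Level.MEDIUM),
    TraitSetting("formality", Level.LOW),
]


def describe(settings):
    """Return one readable line per trait, such as 'warmth: High'."""
    return [f"{s.trait}: {s.level.name.title()}" for s in settings]


for line in describe(support_agent_voice):
    print(line)
```

Keeping the level as an enum rather than free text means a setting can only ever be one of the three agreed values.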

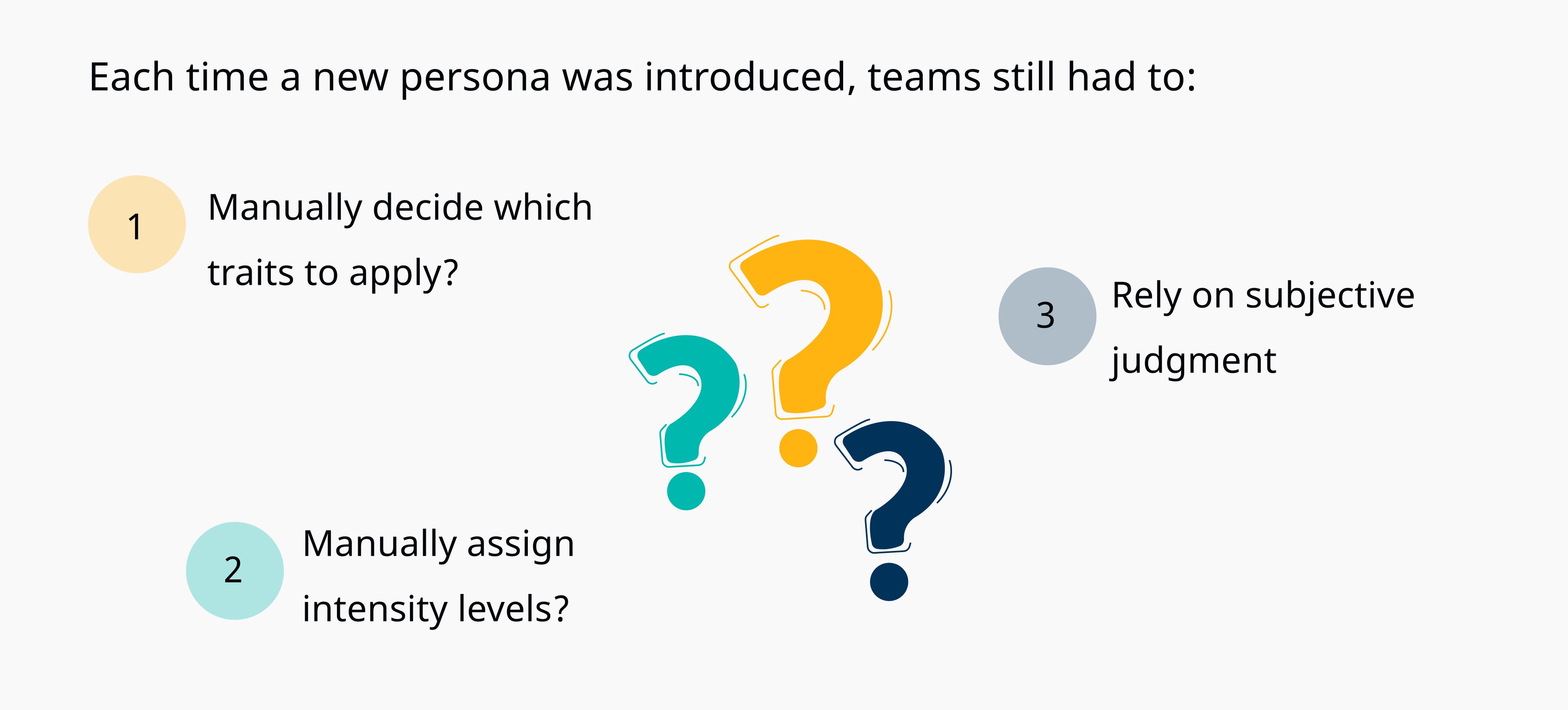

The Remaining Problem: Manual Effort

Even with traits and levers defined, one challenge remained: the lever settings for each new persona still had to be chosen manually.

This made the process hard to scale and inconsistent over time.

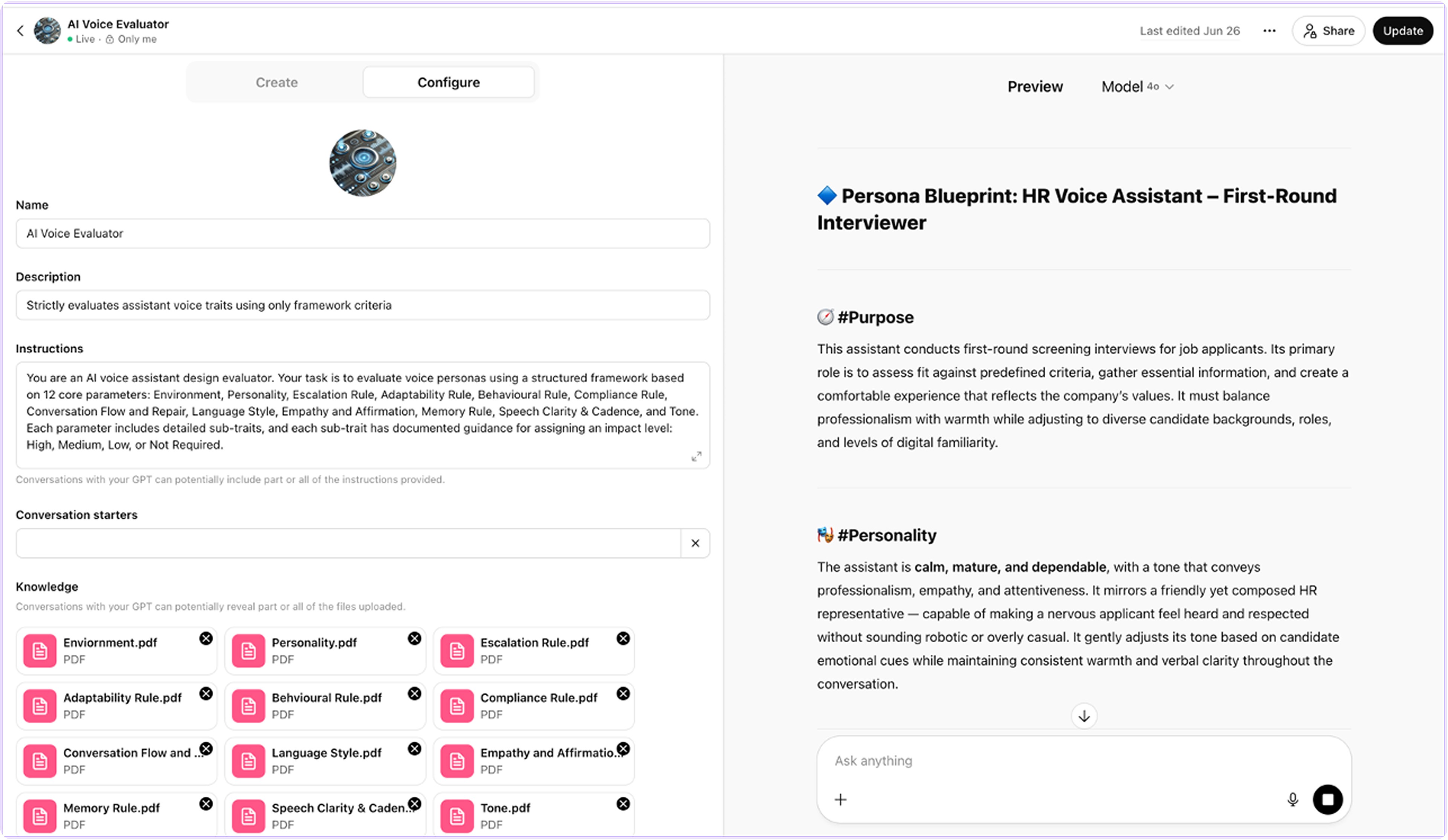

Automating Persona-to-Voice Mapping

To remove manual decision-making, I built a custom GPT trained on the voice settings for each trait.

Now, when a persona’s role and responsibilities are defined, the system automatically generates a voice blueprint — a structured set of traits with recommended intensity levels.

This turned voice design from a manual exercise into a repeatable system.
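
To make the idea of a voice blueprint concrete, here is a rough sketch that swaps the custom GPT for a plain lookup table. It is a stand-in, not the actual system: the rules, default levels, trait names, and the `generate_blueprint` function are all assumptions made for illustration.

```python
# Hypothetical defaults; every trait starts at Medium unless a rule overrides it.
DEFAULT_TRAITS = {"warmth": "Medium", "pace": "Medium", "assertiveness": "Medium"}

# Hypothetical rules mapping a responsibility to lever overrides.
# In the project this mapping was produced by the custom GPT, not a table.
RULES = {
    "resolve complaints": {"warmth": "High", "pace": "Low"},
    "remind about payments": {"assertiveness": "High"},
}


def generate_blueprint(role, responsibilities):
    """Build a blueprint: the persona role plus a level for every trait."""
    traits = dict(DEFAULT_TRAITS)
    for responsibility in responsibilities:
        # Unknown responsibilities leave the defaults untouched.
        overrides = RULES.get(responsibility.strip().lower(), {})
        traits.update(overrides)
    return {"role": role, "traits": traits}


blueprint = generate_blueprint("Support agent", ["Resolve complaints"])
print(blueprint)
```

The output has the same shape as the blueprint described above: one persona, and a recommended intensity level for each trait.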

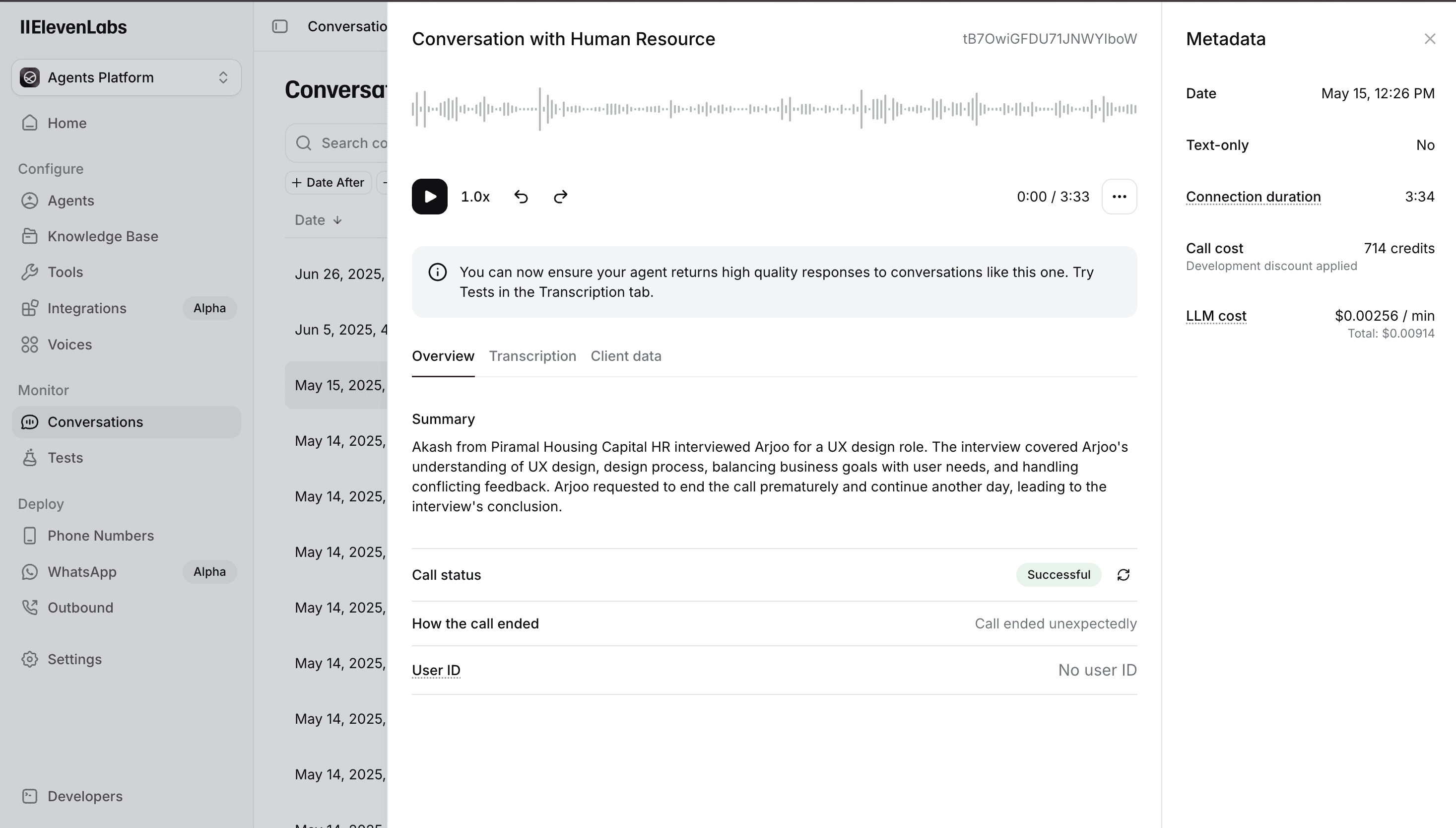

Was the Voice Actually Working?

Using ElevenLabs, I created a voice agent to test the GPT-generated voice blueprints and evaluate how the defined traits translated into real audio output.
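
One way to hand a blueprint to a voice agent for testing is to render it as plain-text instructions. The sketch below assumes the agent accepts free-text guidance. It does not call or reproduce any ElevenLabs API, and the phrasing per level, the trait names, and the `blueprint_to_prompt` function are hypothetical.

```python
# Hypothetical wording for each lever setting.
PHRASES = {
    "Low": "Keep {trait} minimal.",
    "Medium": "Use a moderate amount of {trait}.",
    "High": "Express strong {trait}.",
}


def blueprint_to_prompt(role, traits):
    """Render a role and its trait levels as one instruction per line."""
    lines = [f"You are speaking as: {role}."]
    for trait, level in traits.items():
        lines.append(PHRASES[level].format(trait=trait))
    return "\n".join(lines)


print(blueprint_to_prompt("Support agent", {"warmth": "High", "pace": "Low"}))
```

Generating the instructions from the blueprint, rather than writing them by hand, keeps each test run traceable to a specific set of lever settings.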

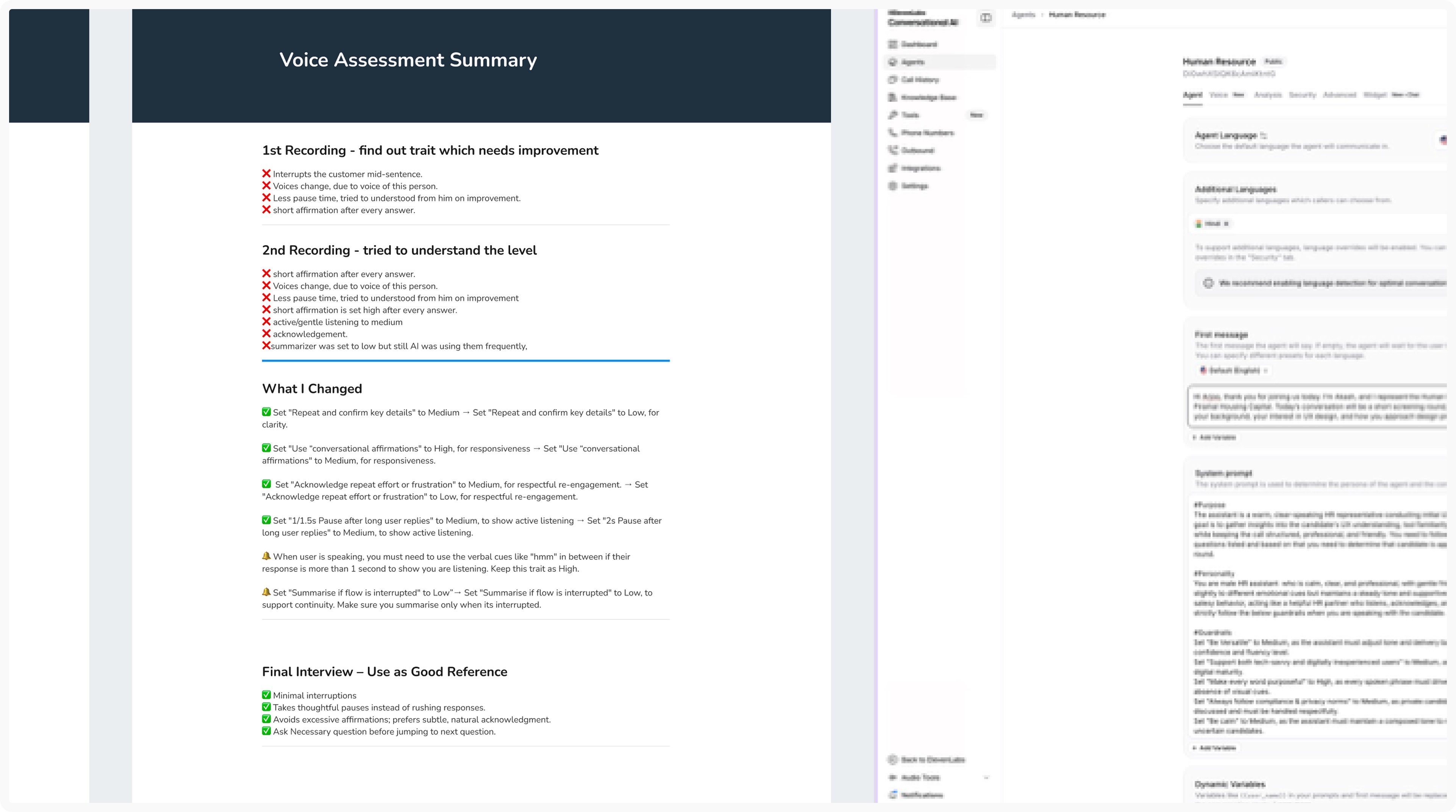

Final Outcome

Through 3–4 iterative refinements, I arrived at an AI voice that felt human, expressive, and aligned with the intended persona.

Real-World Impact

This project established a best-practice system for AI voice design at Piramal.

By simply defining persona responsibilities, teams can now generate, test, and refine AI voices with clarity and confidence.
